[Individual Task] Computer Vision Implementation

Implementation of Robust Photometric Stereo, Structure From Motion, Supervised Depth Refinement

The GIST Computer Vision course (EC4216) had three individual coding assignments: (i) Robust Photometric Stereo, (ii) Structure From Motion, and (iii) Supervised Depth Refinement.

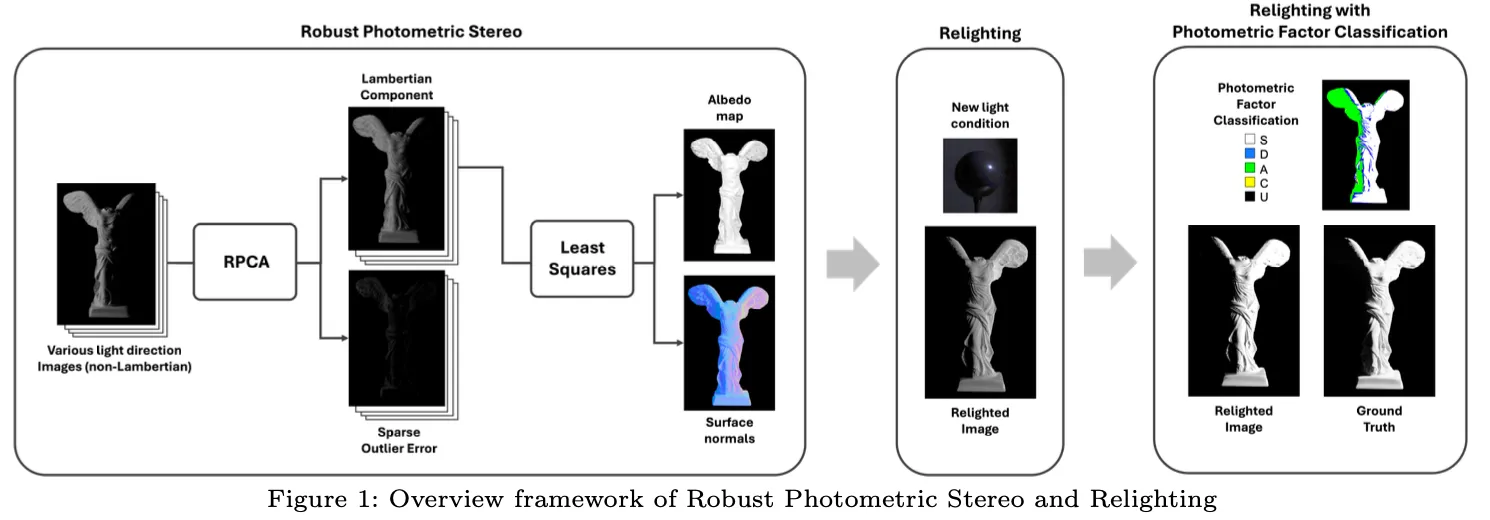

1. Robust Photometric Stereo

Robust Photometric Stereo with RPCA (Robust Principal Component Analysis) and photometric factor weighting for accurate surface normal estimation and relighting.

In this project, I implemented a Robust Photometric Stereo pipeline to precisely estimate the 3D surface information of objects from multiple images. First, assuming that objects follow Lambertian reflectance properties, I implemented the traditional Photometric Stereo method based on Least Squares. Next, to effectively handle non-Lambertian outliers such as shadows and highlights that may occur in real-world environments, I applied Robust Principal Component Analysis (RPCA) to build a Robust Photometric Stereo approach.
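The two stages described above can be sketched in NumPy as follows. This is a minimal illustration, not the assignment code: the function names `least_squares_normals` and `rpca`, the `(images, pixels)` matrix layout, and the parameter defaults are assumptions. The RPCA solver shown is the standard principal component pursuit iteration (singular value thresholding plus soft thresholding), which may differ from the solver used in the project.

```python
import numpy as np

def least_squares_normals(I, Ldirs):
    """Lambertian photometric stereo by least squares.

    I:     (m, p) intensities, m images by p pixels (assumed layout).
    Ldirs: (m, 3) light directions, one row per image.
    Solves I = Ldirs @ G, where each column of G is albedo * normal.
    """
    G, *_ = np.linalg.lstsq(Ldirs, I, rcond=None)      # (3, p)
    albedo = np.linalg.norm(G, axis=0)
    normals = G / np.maximum(albedo, 1e-12)            # unit-length columns
    return normals, albedo

def rpca(M, n_iter=500, tol=1e-7):
    """Split M into low-rank L plus sparse S (principal component pursuit).

    Under the Lambertian model the intensity matrix has rank at most 3,
    so shadows and highlights are expected to land in the sparse part S.
    """
    M = np.asarray(M, dtype=float)
    m, n = M.shape
    lam = 1.0 / np.sqrt(max(m, n))                     # common default weight
    mu = m * n / (4.0 * np.abs(M).sum())               # common default step
    L = np.zeros_like(M)
    S = np.zeros_like(M)
    Y = np.zeros_like(M)                               # Lagrange multipliers
    for _ in range(n_iter):
        # Low-rank update: shrink the singular values by 1/mu.
        U, s, Vt = np.linalg.svd(M - S + Y / mu, full_matrices=False)
        L = (U * np.maximum(s - 1.0 / mu, 0.0)) @ Vt
        # Sparse update: elementwise soft thresholding by lam/mu.
        R = M - L + Y / mu
        S = np.sign(R) * np.maximum(np.abs(R) - lam / mu, 0.0)
        # Dual ascent on the constraint M = L + S.
        Y = Y + mu * (M - L - S)
        if np.linalg.norm(M - L - S) <= tol * np.linalg.norm(M):
            break
    return L, S
```

A robust pipeline along these lines would call `rpca` on the intensity matrix first and then pass the low-rank part to `least_squares_normals`, so that outliers do not bias the normal estimate.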

The detailed code and report (KR) can be found in the link.

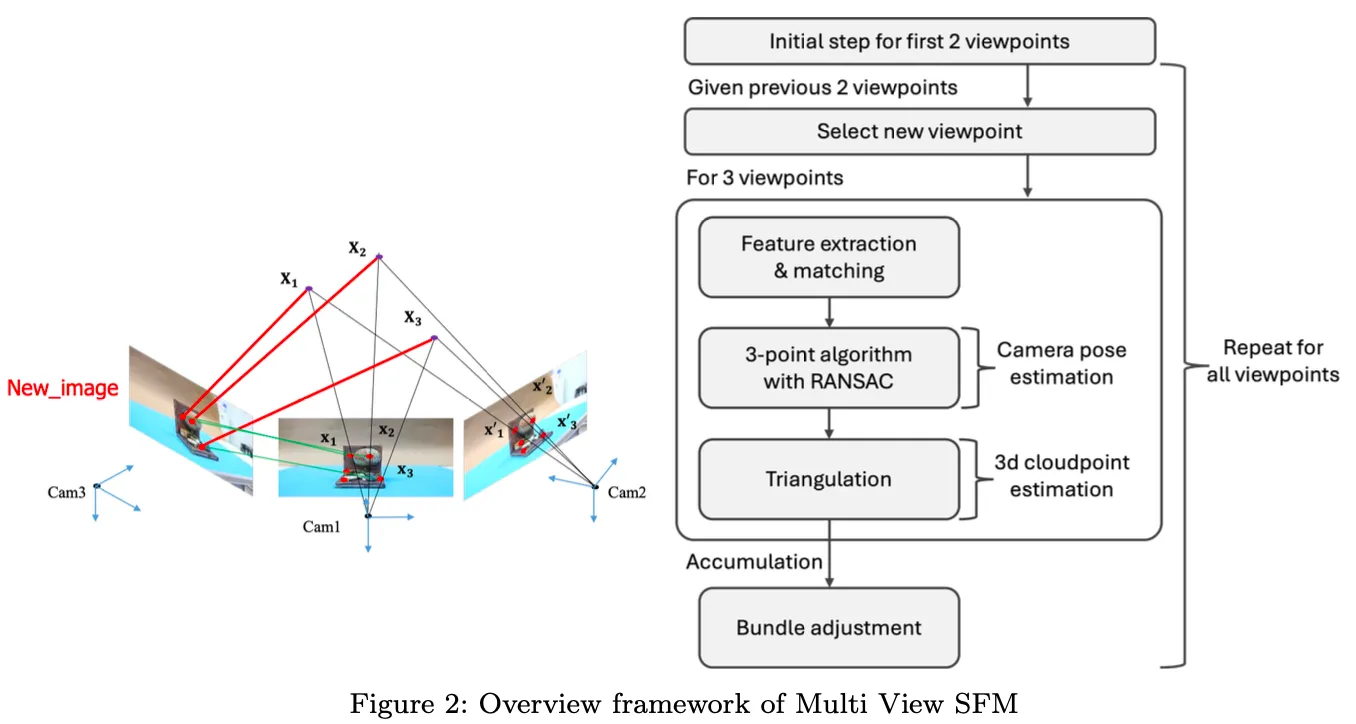

2. Structure From Motion

Structure-from-Motion with two-view and multi-view reconstruction using feature matching, triangulation, and bundle adjustment.

In this project, I implemented Structure From Motion (SfM) to reconstruct the 3D structure of objects from images taken at multiple viewpoints. First, I performed Two-View SfM using the 5-point algorithm on the initial two viewpoints. Then, for additional viewpoints, I applied the 3-point algorithm along with bundle adjustment to perform Multi-View SfM.
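For reference, bundle adjustment is commonly written as a joint minimization of reprojection error over camera poses and 3D points. The notation below is assumed for illustration and is not taken from the project report: $R_i, t_i$ are the pose of camera $i$, $K$ is the intrinsic matrix, $X_j$ is the $j$-th 3D point, $x_{ij}$ is its observed pixel in image $i$, $V(i)$ is the set of points visible in image $i$, and $\pi$ is the perspective division.

$$
\min_{\{R_i, t_i\},\, \{X_j\}} \; \sum_{i} \sum_{j \in V(i)} \left\| x_{ij} - \pi\!\left( K \left( R_i X_j + t_i \right) \right) \right\|^2,
\qquad
\pi\!\left( [u, v, w]^\top \right) = \left[ u / w,\; v / w \right]^\top
$$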

In each SfM stage, the 5-point or 3-point algorithm was used to estimate the camera pose, with RANSAC selecting the most reliable estimate. Once the camera poses were recovered, I triangulated the matched points across images to obtain a 3D point cloud.
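The triangulation step can be illustrated with a linear (DLT) solve for a single match. This is a sketch under assumptions: the helper names `triangulate_dlt` and `project` are hypothetical, the cameras are given as 3x4 projection matrices, and the project may have used a different triangulation variant.

```python
import numpy as np

def triangulate_dlt(P1, P2, x1, x2):
    """Linear triangulation of one point from two views.

    P1, P2: (3, 4) projection matrices K [R | t].
    x1, x2: (2,) pixel coordinates of the same point in each image.
    Each view contributes two linear constraints on the homogeneous point.
    """
    A = np.stack([
        x1[0] * P1[2] - P1[0],
        x1[1] * P1[2] - P1[1],
        x2[0] * P2[2] - P2[0],
        x2[1] * P2[2] - P2[1],
    ])
    # The solution is the right singular vector with the smallest singular value.
    _, _, Vt = np.linalg.svd(A)
    X = Vt[-1]
    return X[:3] / X[3]

def project(P, X):
    """Project a 3D point X (3,) to pixel coordinates with camera P (3, 4)."""
    x = P @ np.append(X, 1.0)
    return x[:2] / x[2]
```

With noisy matches the DLT result is usually treated as an initial estimate that bundle adjustment then refines.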

The detailed code and report (KR) can be found in the link.

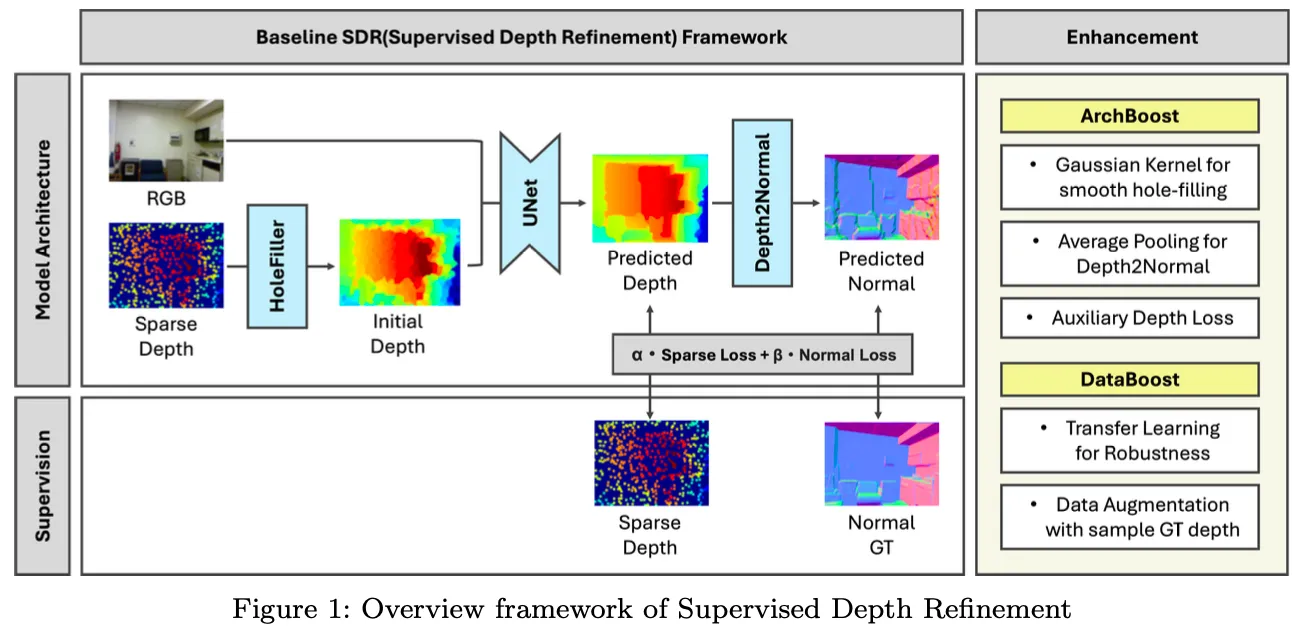

3. Supervised Depth Refinement

Supervised Depth Refinement with UNet, architecture boosts, and data augmentation for accurate depth completion.

In this project, I implemented Supervised Depth Refinement (SDR) to predict a complete depth map from the given sparse depth, RGB image, and surface normals. The SDR model takes sparse depth and RGB as input and outputs depth and normals. It learns its weights under the supervision of sparse ground-truth depth and ground-truth normals.

The baseline model consists of HoleFiller, UNet, and Depth2Normal modules, of which only UNet is trainable. It is trained to minimize a sparse depth loss and a normal loss. To improve on the baseline, I designed and applied two boosting strategies: ArchBoost (Architecture Boost) and DataBoost (Data-driven Boost).
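To make the training objective concrete, here is a NumPy sketch of a depth-to-normal conversion and the two loss terms. Everything specific here is an assumption for illustration: the function names, the finite-difference normal computation with unit pixel spacing (no camera intrinsics), the masked L1 form of the depth loss, the cosine form of the normal loss, and the weight `w_normal`. The project's actual Depth2Normal module and loss definitions may differ.

```python
import numpy as np

def depth_to_normal(depth):
    """Normals from a depth map via finite differences.

    Assumes unit pixel spacing and ignores camera intrinsics, so the
    surface z = depth(x, y) has normal proportional to (-dz/dx, -dz/dy, 1).
    """
    depth = np.asarray(depth, dtype=float)
    dzdy, dzdx = np.gradient(depth)            # axis 0 is rows (y), axis 1 is columns (x)
    n = np.stack([-dzdx, -dzdy, np.ones_like(depth)], axis=-1)
    return n / np.linalg.norm(n, axis=-1, keepdims=True)

def sparse_depth_loss(pred, gt, valid):
    """Mean absolute error over pixels that have sparse ground truth."""
    valid = np.asarray(valid, dtype=bool)
    if not valid.any():
        return 0.0
    return float(np.abs(pred[valid] - gt[valid]).mean())

def normal_loss(pred_n, gt_n, eps=1e-8):
    """Mean of (1 - cosine similarity) between (H, W, 3) normal maps."""
    dot = (pred_n * gt_n).sum(axis=-1)
    denom = np.linalg.norm(pred_n, axis=-1) * np.linalg.norm(gt_n, axis=-1) + eps
    return float((1.0 - dot / denom).mean())

def total_loss(pred_depth, gt_depth, valid, gt_normal, w_normal=1.0):
    """Sparse depth loss plus weighted normal loss on normals derived from depth."""
    pred_normal = depth_to_normal(pred_depth)
    return (sparse_depth_loss(pred_depth, gt_depth, valid)
            + w_normal * normal_loss(pred_normal, gt_normal))
```

Deriving the normals from the predicted depth, rather than predicting them separately, lets the normal loss push gradients back into the depth output, which is one plausible reason for keeping Depth2Normal non-trainable.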

The detailed code and report (KR) can be found in the link.